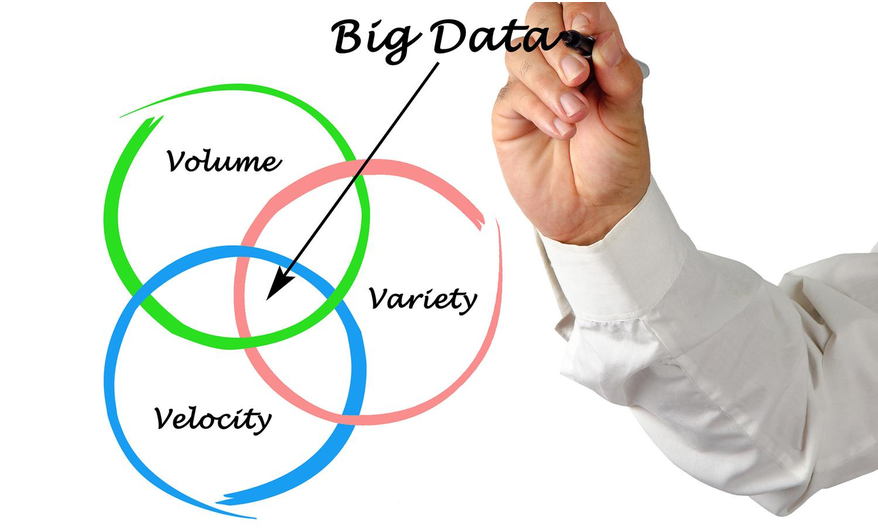

Big Data refers to data sets so large that they require special handling. Everyone is talking about Big Data these days, so what makes it so special?

Here’s a list of the ten best features of big data that make it a vital tool for today’s business environment.


1. Volume

The key feature that makes Big Data "big" is, of course, its size. It is also one of its most useful features: large data sets provide plenty of opportunities for analytics.

Data sets were once measured in kilobytes, then megabytes and gigabytes; today a commonly accepted starting point for a big data set is one terabyte. Data at that scale is usually impractical to store and process on a single computer. That is a generally accepted defining point of big data: it calls for distributed processing, for example a Hadoop cluster or a massively parallel processing (MPP) database.
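To make the idea of distributed processing concrete, here is a minimal sketch of the MapReduce pattern that Hadoop popularized, simulated in a single Python process. The function names and the `n_nodes` parameter are hypothetical and this is not Hadoop's API. Each "node" maps its own chunk of input to key/value pairs, a shuffle step groups the pairs by key, and a reduce step combines each group.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def map_phase(chunk: List[str]) -> List[Tuple[str, int]]:
    """Emit (word, 1) for every word in one node's chunk of lines."""
    return [(word.lower(), 1) for line in chunk for word in line.split()]


def shuffle(mapped: Iterable[List[Tuple[str, int]]]) -> Dict[str, List[int]]:
    """Group emitted values by key, as the framework would between phases."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for pairs in mapped:
        for key, value in pairs:
            groups[key].append(value)
    return groups


def reduce_phase(groups: Dict[str, List[int]]) -> Dict[str, int]:
    """Combine all values for each key into a single total."""
    return {key: sum(values) for key, values in groups.items()}


def word_count(lines: List[str], n_nodes: int = 3) -> Dict[str, int]:
    # Split the input into n_nodes chunks, standing in for data spread across machines.
    chunks = [lines[i::n_nodes] for i in range(n_nodes)]
    # On a real cluster each map_phase call would run in parallel on its own node.
    mapped = [map_phase(chunk) for chunk in chunks]
    return reduce_phase(shuffle(mapped))


if __name__ == "__main__":
    logs = ["big data is big", "data needs processing", "Big clusters process data"]
    print(word_count(logs))
```

Because each chunk is mapped independently, adding machines adds capacity. That is why this style of processing scales to data sets no single computer can handle.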

Of course, we can safely predict that the volume of data a single computer can handle will keep growing. A famous quip, often attributed to Bill Gates though he has denied saying it, held that 640K of memory ought to be enough for anybody. Going back further, an often-repeated prediction put worldwide demand for computers at about five. In the future we may be able to handle big data volumes on a single computer. That progress is in fact another attribute of Big Data (see point 9): its ability to drive growth.

2. Variety

Another of Big Data's most attractive features is its variety. Big Data includes both structured and unstructured data, and that data can be extremely diverse, spanning multiple formats and a multitude of variables. Variety means new relationships within the data can be established: data-mining techniques can find matches and patterns across the sets that we would otherwise never have discovered.

The variety of Big Data has many applications in new business development, but its most important applications are in research, particularly medical research.

3. Velocity

Velocity in Big Data covers two concepts: the rapid collection of data, whether as a byproduct of digital processes or through applied measurement techniques, and the ability to process that data in real time or very soon after it is collected.
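Real-time processing usually means updating a result as each event arrives rather than waiting for a batch. A minimal sketch, assuming a stream of hypothetical `(timestamp, value)` sensor readings, keeps a running average over a sliding time window so that every new reading yields an up-to-date figure:

```python
from collections import deque
from typing import Iterable, Iterator, Tuple


def sliding_average(
    readings: Iterable[Tuple[float, float]], window_seconds: float
) -> Iterator[Tuple[float, float]]:
    """Yield (timestamp, mean of readings in the last window_seconds) per event."""
    window: deque = deque()
    total = 0.0
    for ts, value in readings:
        window.append((ts, value))
        total += value
        # Evict readings that have fallen out of the time window.
        while window and window[0][0] <= ts - window_seconds:
            _, old_value = window.popleft()
            total -= old_value
        yield ts, total / len(window)


stream = [(0.0, 10.0), (1.0, 20.0), (2.0, 30.0), (5.0, 40.0)]
for ts, avg in sliding_average(stream, window_seconds=3.0):
    print(f"t={ts}: {avg}")
```

Each reading is handled once on arrival and dropped once it leaves the window, so memory use depends on the window size rather than on the total amount of data collected.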

This means we are building useful data sets ever more quickly. The speed of collection helps solve future problems, drive research and technology, raise living standards, and improve safety. Quick collection and processing delivers results to those who need them, when they need them, and so extends the reach of the data.

4. Remaining Vs

I’ve grouped the remaining Vs into a single attribute, because each is a smaller attribute that adds to the overall picture. There is also a great deal of variance in which Vs get included, which is fitting, since one of them is variability itself. Variability is usually termed the 4th V, although some consider it a form of Variety.

Value is usually the 5th V. Big Data sets need to provide value, even if that value is latent, rather than being data for the sake of data.

Many also mention validity, an important component of any data set. The two I’ll discuss in more detail are veracity and vulnerability: key attributes among the remaining Vs that are not always thought of this way.

In simplified terms, veracity refers to the error qualities, or truthfulness, of a set of figures. Yet errors themselves can lead to better learning. For example, common errors in colloquial speech help improve error recognition for autocorrect and reveal new language patterns. Veracity also helps establish the antithesis, showing what is wrong alongside what is right. Weighing evidence both for and against a hypothesis is at the heart of Bayesian reasoning, a fundamental concept in data analysis.
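Bayes' theorem makes "evidence for and against" precise: P(H | E) = P(E | H) · P(H) / P(E). The sketch below applies it to veracity, using purely hypothetical figures: 5% of records are erroneous, and a validation check flags 90% of erroneous records but also 10% of correct ones. A flag raises our belief that a record is wrong, and a pass lowers it. Both outcomes are informative.

```python
def bayes_update(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Return P(H | E) from P(H), P(E | H) and P(E | not H) using Bayes' theorem."""
    numerator = p_evidence_if_true * prior
    # Total probability of seeing the evidence at all: P(E).
    evidence = numerator + p_evidence_if_false * (1.0 - prior)
    return numerator / evidence


# Hypothetical rates, chosen for illustration only.
prior_error = 0.05
p_flag_if_error, p_flag_if_ok = 0.90, 0.10

# Record was flagged: belief that it is erroneous rises to about 0.32.
after_flag = bayes_update(prior_error, p_flag_if_error, p_flag_if_ok)
# Record passed the check: belief that it is erroneous falls to about 0.006.
after_pass = bayes_update(prior_error, 1 - p_flag_if_error, 1 - p_flag_if_ok)
print(round(after_flag, 3), round(after_pass, 4))
```

Even a fairly accurate check leaves most flagged records correct when errors are rare, which is why error rates themselves are worth measuring.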

Vulnerability reminds us that Big Data hacks can cause big problems. When we’re aware of the consequences, we can step up data protection. Vulnerability can also prompt us to use Big Data itself for fraud profiling, protection, and catching cybercriminals in the act.
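One simple form of fraud profiling compares new activity against an account's own history. The sketch below uses a z-score, the number of standard deviations from the historical mean. The function name, the 3.0 threshold, and the transaction amounts are all hypothetical, and real fraud systems use far richer models.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def flag_anomalies(
    history: Sequence[float], new: Sequence[float], threshold: float = 3.0
) -> List[float]:
    """Return the new amounts whose z-score against the history exceeds threshold."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # No spread in the history: any amount that differs from the mean is unusual.
        return [x for x in new if x != mu]
    return [x for x in new if abs(x - mu) / sigma > threshold]


past_amounts = [20, 25, 22, 19, 24, 21, 23]  # hypothetical card history
print(flag_anomalies(past_amounts, [24, 500, 18]))
```

With this history (mean 22, standard deviation 2), only the 500 purchase lies more than three standard deviations out.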

5. Storytelling

One of Big Data's great attributes is its ability to tell us a story through the patterns we uncover. Thinking about data as a story allows us to delve into the key characters: their purpose, motivations, and effect. Since Big Data is voluminous and varied (points 1 and 2), each character can supply enough factors for a full set of investigations and analyses to piece together plots and sub-plots.

An insurance company might analyze data on its policyholders and claims. The featured characters might include health care providers, assessors, policyholders, third-party claimants, and police. As we follow different plot lines, such as car accident claims, more characters emerge and more avenues for investigation and mitigation open up, for example driver education, road safety policy, and claims fraud investigators.

Another example is the story a supermarket shopping cart can tell about the family or individual behind it. Are they entertaining guests? Have they suddenly started living alone, gone on a diet, or started school? Are they expecting a baby?
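A common starting point for reading such stories from carts is market basket analysis: counting which items are bought together. This minimal sketch computes the support of each item pair, meaning the fraction of baskets that contain both items, and keeps pairs above a threshold. The basket contents and the `min_support` value are hypothetical.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, List, Tuple


def frequent_pairs(baskets: List[List[str]], min_support: float) -> Dict[Tuple[str, str], float]:
    """Return item pairs whose support (share of baskets containing both) meets min_support."""
    counts: Counter = Counter()
    for basket in baskets:
        # Deduplicate and sort so ("milk", "bread") and ("bread", "milk") count as one pair.
        for pair in combinations(sorted(set(basket)), 2):
            counts[pair] += 1
    n = len(baskets)
    return {pair: c / n for pair, c in counts.items() if c / n >= min_support}


carts = [
    ["diapers", "wipes", "milk"],
    ["diapers", "wipes"],
    ["bread", "milk"],
    ["diapers", "wipes", "bread"],
]
print(frequent_pairs(carts, min_support=0.5))
```

A pair that shows up suddenly in one household's carts, such as diapers with wipes, is the kind of pattern that hints at a new plot line.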

Big Data tells the story behind each character and helps develop their plot. Plots enable data models and predictions.

6. Hidden Opportunities

Big Data can provide opportunities for data mining to discover information that was previously unknown. Big Data includes structured data, unstructured data, and dark data.

Unstructured data used to be very costly to process, yet by a widely cited estimate it makes up around 80% of available data. It includes images, video and audio, satellite data, atmospheric data, social media, emails, most web content, and many other sources.

Dark data is data that is collected but not used for any specific purpose, and it is often unstructured. Before big data techniques, processing it was not considered worthwhile.

Processing of unstructured and dark data can provide new insights and open up new business opportunities. Big Data technology is providing the ability to process and learn from these previously untapped resources.

7. Time

Using Big Data cuts down the time it takes to find a pattern or solution. Big Data methods have made processing irregular items much faster. For example, machine learning has made language recognition in comments and free text far quicker; previously this was a difficult, time-consuming task for a database with strict rules. As a result, data insights are available to us faster than ever before.

The speed with which Big Data can help us find solutions provides immense benefit in commercial applications and real-time processing.

8. Modeling Capability

Large data sets make it easier to build models and algorithms. A model tells us how each factor influences the outcome, which lets us develop better predictions. With models and algorithms, we can make better decisions and create better services. A good example is the complex algorithms behind Google’s search results, which are computed in a fraction of a second.
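At its simplest, "how each factor influences the outcome" is the slope of a fitted model. This minimal sketch fits an ordinary least squares line for a single factor. The `fit_line` name and the spend-versus-sales figures are hypothetical. Real models use many factors, but each fitted coefficient answers the same question.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = intercept + slope * x; returns (slope, intercept)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


ad_spend = [1, 2, 3, 4, 5]  # hypothetical factor
sales = [5, 7, 9, 11, 13]  # hypothetical outcome
slope, intercept = fit_line(ad_spend, sales)
print(slope, intercept)  # each extra unit of spend adds `slope` units of sales
print(intercept + slope * 6)  # prediction for a spend of 6
```

With real data the points never fall exactly on a line, and more observations give more stable estimates of the slope. This is one reason large data sets improve modeling.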

Large data sets also make complex modeling possible, such as the neural networks that form the basis of many machine learning applications. Developing and validating machine learning models that solve complex non-linear problems generally requires very large data sets.

Big data sets also allow flexible modeling. With non-relational databases, the data model can be applied at the query stage or the data output stage, rather than being imposed at the data input stage, as standard relational databases require.
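This approach is often called schema-on-read. Here is a minimal sketch, assuming hypothetical event records stored as raw JSON strings. Nothing is validated when the records are stored. Each query supplies its own schema, a hypothetical mapping of field names to conversion functions, and missing fields simply come back as `None`.

```python
import json
from typing import Any, Callable, Dict, Iterable, List, Optional

# Raw records stored as-is; note they do not all share the same fields.
raw_events = [
    '{"user": "a1", "action": "view", "ms": 120}',
    '{"user": "b2", "action": "purchase", "amount": 19.99}',
    '{"user": "a1", "action": "purchase", "amount": 5.01, "coupon": "SPRING"}',
]


def query(lines: Iterable[str], schema: Dict[str, Callable[[Any], Any]]) -> List[Dict[str, Optional[Any]]]:
    """Parse raw records and project them onto a schema chosen at query time."""
    rows = []
    for line in lines:
        record = json.loads(line)
        rows.append({field: cast(record[field]) if field in record else None
                     for field, cast in schema.items()})
    return rows


# Two different "models" of the same stored data, defined only when we ask.
purchases = [r for r in query(raw_events, {"user": str, "amount": float})
             if r["amount"] is not None]
activity = query(raw_events, {"user": str, "action": str})

total_revenue = sum(r["amount"] for r in purchases)
print(round(total_revenue, 2), len(activity))
```

A relational (schema-on-write) design would instead have to fix the columns before the first record was loaded, and a new field such as `coupon` would require a schema change.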

Having flexible models at the output stage means the possible outcomes are far more diverse, and potentially far more powerful, than with traditional models.

9. Data Technology Growth

There is no doubt that the growth of data sets into massive volumes of Big Data, used across many commercial and public sectors, has driven impressive growth in the data technology industry.

Non-relational data management, virtualization, real-time processing, and machine learning are among the many advances in data technology that Big Data has fostered.

Big Data has enabled growth in the data sector through proof of monetary benefits. It has spawned new industries and prompted research breakthroughs. The fact that the data technology developed often pays for itself drives more growth.

10. Problem-Solving Capability

The final, and I think overall best, feature of big data is its ability to solve problems. Big Data helps us solve problems through the clues provided by its volume, variety, and velocity.

Predictive, diagnostic, and preventive data analysis needs large data sets to draw accurate conclusions. In general, the larger the data set, the easier it is to identify problems and solve them.

With large data sets, we can anticipate potential problems and solve them before they happen. For example, you can analyze large amounts of engine data for trends in oil or fuel consumption that then trigger maintenance events. Cross-analyzing the historical records of both data sets reveals which maintenance events are linked to which changes in engine parameters.
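A trend-based trigger like this can be sketched by fitting a slope to a rolling window of readings and raising a maintenance event when the slope exceeds a limit. The window size, the `max_slope` limit, and the oil-consumption readings below are hypothetical. In practice, the historical cross-analysis described above is what would set such a limit.

```python
from typing import List, Sequence


def slope(values: Sequence[float]) -> float:
    """Least squares slope of values against their index (change per reading)."""
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def maintenance_triggers(readings: Sequence[float], window: int = 5,
                         max_slope: float = 0.05) -> List[int]:
    """Return indices of readings at which the rolling trend exceeds max_slope."""
    triggers = []
    for end in range(window, len(readings) + 1):
        if slope(readings[end - window:end]) > max_slope:
            triggers.append(end - 1)  # index of the newest reading in the window
    return triggers


# Hypothetical oil consumption per flight hour: flat, then climbing.
oil_use = [1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.2, 1.4, 1.6]
print(maintenance_triggers(oil_use))
```

Fitting a slope over a window, rather than comparing two consecutive readings, keeps a single noisy reading from triggering an unnecessary maintenance event.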

Another example of problem-solving with big data is the detailed trending of electricity and water usage. This analysis helps supply companies prevent shortages by managing resources properly before a crisis hits. Law enforcement has used big data to help solve crimes and to deploy resources effectively, targeting the locations and causes of crime hotspots. For individuals and businesses alike, problem-solving is the most helpful attribute of big data.
