Data on the web can be accessed in several ways. The most common are browsing, using an API, and parsing the web pages' code directly. The last method is referred to as web scraping. The second method is only applicable if the website you want to extract data from provides an API.

Web scraping is a relatively young field that has seen active development and several milestones, and it shares a common goal with the semantic web vision. These developments also connect to data visualization. Web scraping is still regarded as an ambitious initiative that depends on further breakthroughs in text processing, artificial intelligence, human-computer interaction, and semantic understanding.

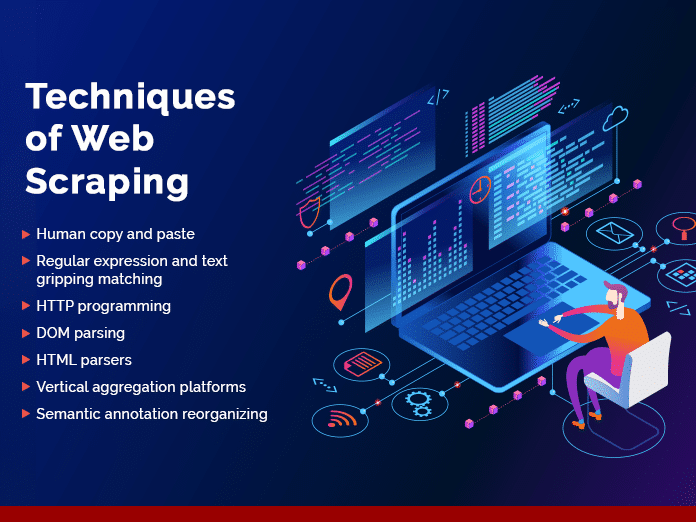

Web scraping tends to favor practical solutions built on existing technologies, many of which were originally ad hoc. The web scraping technologies employed by Loginworks can be applied at several different levels, described below.


1. Human Copy and Paste

Some argue that even the best web scraping technology cannot match careful manual examination and copy-and-paste for small or irregular jobs. When you need the right information on a given niche in a short time, however, automated web scraping is the more practical solution.

2. Text Grepping and Regular Expression Matching

This is a simple yet powerful way of extracting information from web pages. It can be based on the UNIX grep command or on the regular-expression matching facilities of common programming languages.

Perl and Python are common choices. Our web scraping services can obtain a large amount of information this way.
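As a rough illustration, the sketch below applies one regular expression to raw HTML text and pulls out product links, names, and prices. The markup, the `ITEM_RE` pattern, and the `extract_items` helper are hypothetical; a real page would need its own pattern.

```python
import re

# Hypothetical sample markup; a real scraper would fetch this from a page.
SAMPLE_HTML = """
<ul>
  <li><a href="/products/1">Blue Mug</a> <span class="price">$12.50</span></li>
  <li><a href="/products/2">Red Plate</a> <span class="price">$8.00</span></li>
</ul>
"""

# Assumed layout: a link immediately followed by a price span.
ITEM_RE = re.compile(
    r'<a href="(?P<url>[^"]+)">(?P<name>[^<]+)</a>\s*'
    r'<span class="price">\$(?P<price>\d+\.\d{2})</span>'
)


def extract_items(html: str) -> list[dict]:
    """Return one dict per match of ITEM_RE in the raw HTML text."""
    return [
        {"url": m["url"], "name": m["name"], "price": float(m["price"])}
        for m in ITEM_RE.finditer(html)
    ]


if __name__ == "__main__":
    for item in extract_items(SAMPLE_HTML):
        print(item)
```

Patterns like this are quick to write but brittle: a changed attribute order or an extra wrapper element breaks the match, which is why the parser-based techniques below are often preferred for larger jobs.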

3. HTTP Programming

Retrieving information from static and dynamic web pages can be a real challenge. Our web scraping handles this by posting HTTP requests directly to the remote web server using socket programming.

This way, our clients get accurate data even from pages where it would otherwise be difficult to obtain.
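A minimal sketch of the socket approach, assuming a plain-HTTP server on port 80 (no TLS). It sends an HTTP/1.0 request so the server closes the connection when done and does not use chunked transfer encoding; the helper names and the User-Agent string are hypothetical.

```python
import socket


def build_get_request(host: str, path: str = "/") -> bytes:
    """Build a minimal HTTP/1.0 GET request as raw bytes."""
    lines = [
        f"GET {path} HTTP/1.0",
        f"Host: {host}",
        "User-Agent: example-scraper/0.1",  # hypothetical UA string
        "Connection: close",  # server closes the socket after the response
        "",
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def parse_response(raw: bytes) -> tuple[int, dict[str, str], bytes]:
    """Split a raw HTTP response into status code, headers and body."""
    head, _, body = raw.partition(b"\r\n\r\n")
    status_line, *header_lines = head.decode("iso-8859-1").split("\r\n")
    status = int(status_line.split(" ", 2)[1])
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return status, headers, body


def fetch(host: str, path: str = "/", port: int = 80, timeout: float = 10.0):
    """Send the request over a TCP socket and read until the server closes it."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(build_get_request(host, path))
        chunks = []
        while chunk := sock.recv(4096):
            chunks.append(chunk)
    return parse_response(b"".join(chunks))
```

Usage would look like `status, headers, body = fetch("example.com", "/")`. In practice a library such as `http.client` handles redirects, TLS, and encodings for you; working at the socket level mainly helps when you need full control over the exact bytes sent.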

4. DOM Parsing

By embedding a full-fledged web browser, such as the Internet Explorer or Mozilla browser control, programs can retrieve dynamic content generated by client-side scripts.

These browser controls also parse web pages into a DOM tree, from which our web scraping programs can retrieve specific parts of the pages.
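Python's standard library cannot run client-side scripts, so the sketch below only illustrates the second half of this idea: turning HTML into a DOM-like tree and querying it. It builds a small tree with `html.parser.HTMLParser`; the `Node`, `DOMBuilder`, and `parse_dom` names are hypothetical, and executing JavaScript would require an actual embedded or headless browser.

```python
from html.parser import HTMLParser

# Elements that never take a closing tag in HTML.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class Node:
    """A minimal DOM-like element; children are Node objects or text strings."""

    def __init__(self, tag, attrs=None, parent=None):
        self.tag = tag
        self.attrs = dict(attrs or [])
        self.parent = parent
        self.children = []

    def iter(self):
        """Yield this element and all descendant elements in document order."""
        yield self
        for child in self.children:
            if isinstance(child, Node):
                yield from child.iter()

    def find_all(self, tag, attrs=None):
        """Return descendant elements with this tag whose attributes match."""
        attrs = attrs or {}
        return [n for n in self.iter()
                if n.tag == tag and all(n.attrs.get(k) == v for k, v in attrs.items())]

    def get_text(self):
        """Concatenate all text under this element, in document order."""
        return "".join(c if isinstance(c, str) else c.get_text() for c in self.children)


class DOMBuilder(HTMLParser):
    """Build a Node tree from the standard-library HTML tokenizer."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.root = Node("#document")
        self.current = self.root

    def handle_starttag(self, tag, attrs):
        node = Node(tag, attrs, parent=self.current)
        self.current.children.append(node)
        if tag not in VOID_TAGS:
            self.current = node  # descend until the matching end tag

    def handle_startendtag(self, tag, attrs):
        # Self-closing syntax such as <br/>: add the node without descending.
        self.current.children.append(Node(tag, attrs, parent=self.current))

    def handle_endtag(self, tag):
        # Climb to the nearest open element with this tag, which tolerates
        # unclosed children; stray end tags with no open match are ignored.
        node = self.current
        while node is not None and node.tag != tag:
            node = node.parent
        if node is not None:
            self.current = node.parent

    def handle_data(self, data):
        self.current.children.append(data)


def parse_dom(html):
    builder = DOMBuilder()
    builder.feed(html)
    builder.close()
    return builder.root


PAGE = ('<div id="main"><h1>Catalog</h1>'
        '<p class="item"><img src="mug.png">Blue <b>Mug</b></p>'
        '<p class="item">Red Plate</p></div>')
```

For example, `[p.get_text() for p in parse_dom(PAGE).find_all("p", {"class": "item"})]` collects the text of each item paragraph, including text nested inside child tags.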

5. HTML Parsers

Semi-structured data query languages, such as XQuery and HTQL, can be used to parse HTML pages and to retrieve and transform their content. Our web scraping services use such tools to get all the information your business needs from HTML pages.
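XQuery and HTQL are not part of Python's standard library, so this sketch swaps in the limited XPath subset supported by `xml.etree.ElementTree` to show the same query-then-transform idea. It assumes well-formed, XHTML-style markup without a namespace (ElementTree rejects malformed HTML); the sample table and the `query_prices` helper are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical well-formed page; real-world HTML may need cleaning first.
XHTML = """<html><body>
  <table id="prices">
    <tr><td class="name">Blue Mug</td><td class="price">12.50</td></tr>
    <tr><td class="name">Red Plate</td><td class="price">8.00</td></tr>
  </table>
</body></html>"""


def query_prices(markup: str) -> dict[str, float]:
    """Select table rows with a path query and transform them into a dict."""
    root = ET.fromstring(markup)
    result = {}
    # ElementTree's XPath subset supports descendant search and [@attr='value'].
    for row in root.findall(".//table[@id='prices']/tr"):
        name = row.find("td[@class='name']").text
        price = row.find("td[@class='price']").text
        result[name] = float(price)
    return result


if __name__ == "__main__":
    print(query_prices(XHTML))
```

The query expression carries the structural knowledge, so the extraction code stays short; a full query language adds joins, functions, and richer output construction on top of this.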

6. Vertical Aggregation Platforms

Several companies have developed vertical-specific harvesting platforms. These platforms create and monitor many bots, each aimed at a specific vertical (an industry or market segment).

With this technique, preparation consists of establishing a knowledge base for the entire vertical; the platform then creates the bots automatically.

We measure our platforms by the quality of the information they obtain. A robust platform is one that returns quality information rather than chunks of useless data.
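The sketch below is a hypothetical, heavily simplified picture of that workflow, not a description of any real platform. A single knowledge base for an assumed "real estate" vertical maps each field to a regex; bots for individual sites are generated from it with no site-specific work; and a simple quality score measures what share of the expected fields were actually filled. All names (`REAL_ESTATE_KB`, `Bot`, `create_bots`, `quality`) are illustrative.

```python
import re
from dataclasses import dataclass

# Hypothetical knowledge base for a real-estate vertical: each field the
# vertical cares about, with a pattern that locates it in page text.
REAL_ESTATE_KB = {
    "price": re.compile(r"\$\s?(\d[\d,]*)"),
    "bedrooms": re.compile(r"(\d+)\s*(?:bedroom|bed)s?\b", re.I),
    "area_sqft": re.compile(r"(\d[\d,]*)\s*sq\.?\s*ft", re.I),
}


@dataclass
class Bot:
    """A per-site bot generated from the vertical's knowledge base."""
    site: str
    kb: dict

    def extract(self, page_text: str) -> dict:
        record = {"site": self.site}
        for field, pattern in self.kb.items():
            m = pattern.search(page_text)
            # Strip thousands separators; missing fields are recorded as None.
            record[field] = m.group(1).replace(",", "") if m else None
        return record


def create_bots(sites, kb):
    """Every bot is derived from the same knowledge base; nothing is per-site."""
    return [Bot(site, kb) for site in sites]


def quality(records, kb):
    """Share of knowledge-base fields actually filled, from 0.0 to 1.0."""
    total = len(records) * len(kb)
    filled = sum(r[f] is not None for r in records for f in kb)
    return filled / total if total else 0.0
```

A score like this makes "quality information versus useless chunks" measurable: a bot whose records keep coming back half empty is a signal that the knowledge base or the target site has changed.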

7. Semantic Annotation Recognizing

Our web scraping services also cater to web pages that include semantic or metadata markup and annotations, which can be used to locate specific data snippets. When the annotations are embedded in the pages themselves, this technique can be seen as a special case of DOM parsing. When they are organized into a separate semantic layer, our web scraping service can retrieve data schemas and instructions from that layer before scraping the pages.
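One widely used form of embedded annotation is JSON-LD, typically with the schema.org vocabulary, placed in `<script type="application/ld+json">` blocks. As an illustration only (other forms such as microdata, RDFa, or microformats would need different handling), this sketch collects and decodes those blocks; the sample page and the `extract_jsonld` helper are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect and decode <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script contents may arrive in several pieces, so buffer them.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.items.append(json.loads("".join(self._buffer)))


def extract_jsonld(html: str) -> list:
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.items


PAGE = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Blue Mug",
 "offers": {"@type": "Offer", "price": "12.50", "priceCurrency": "USD"}}
</script>
</head><body><h1>Blue Mug</h1></body></html>"""
```

Because the site itself labels the data ("this is a Product, this is its price"), the scraper does not have to guess from layout, which tends to make this approach more stable than pattern matching on visible text.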

Final Words

Loginworks is dedicated to gathering enough quality data to enable a company to make sound decisions. We choose among these techniques based on the information you want and the complexity of the target web pages.
