In this article, I’m going to explain five different types of data processing. The first two, scientific and commercial data processing, are application-specific types; the other three are method-specific types.

First, a quick summary: data processing is the process of converting raw data into meaningful information.

Data processing can be broken down into the following steps:

- Data capture, or data collection,

- Data storage,

- Data conversion (changing to a usable or uniform format),

- Data cleaning and error removal,

- Data validation (checking the conversion and cleaning),

- Data separation and sorting (drawing patterns, relationships, and creating subsets),

- Data summarization and aggregation (combining subsets in different groupings for more information),

- Data presentation and reporting.
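The steps above can be sketched as a small pipeline. This is a minimal illustration, not a reference implementation: the sample records, field names, and function names are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical raw records, as they might arrive from a capture form.
RAW_RECORDS = [
    {"region": "north", "amount": "120.50"},
    {"region": "North ", "amount": "79.50"},
    {"region": "south", "amount": "n/a"},  # cannot be converted to a number
    {"region": "south", "amount": "200"},
]


def convert(record):
    """Conversion: normalize the text and turn the amount into a number."""
    region = record["region"].strip().lower()
    try:
        amount = float(record["amount"])
    except ValueError:
        amount = None  # flagged here, removed in the cleaning step
    return {"region": region, "amount": amount}


def clean(records):
    """Cleaning: drop records whose amount could not be converted."""
    return [r for r in records if r["amount"] is not None]


def validate(records):
    """Validation: confirm every remaining record is usable."""
    for r in records:
        if not r["region"] or r["amount"] < 0:
            raise ValueError(f"invalid record: {r}")
    return records


def summarize(records):
    """Sorting and aggregation: total the amounts per region."""
    totals = defaultdict(float)
    for r in sorted(records, key=lambda rec: rec["region"]):
        totals[r["region"]] += r["amount"]
    return dict(totals)


def run_pipeline(raw):
    return summarize(validate(clean([convert(r) for r in raw])))


# Presentation: print a simple report.
report = run_pipeline(RAW_RECORDS)
for region, total in report.items():
    print(f"{region}: {total:.2f}")
```

Keeping each step in its own function makes it easy to spend more effort on one step, such as validation, without touching the others.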

There are different data processing techniques, depending on what the data is needed for. At the bench level, these may include:

- Statistical,

- Algebraic,

- Mapping and plotting,

- Forest and tree method,

- Machine learning,

- Linear models,

- Non-linear models,

- Relational processing, and

- Non-relational processing.

These are methodologies and techniques that can be applied within the key types of data processing.

What we’re going to discuss in this article is the five main high-level types of data processing, or in other words, the overarching types of systems in data analytics.

Data Processing by Application Type

The first two key types of data processing I’m going to talk about are scientific data processing and commercial data processing.

1. Scientific Data Processing

When used in scientific study or research and development work, data sets can require quite different methods than commercial data processing.

Scientific data processing is a specialized type of data processing used in academic and research fields.

It’s vitally important for scientific data that there are no significant errors that lead to wrong conclusions. Because of this, the cleaning and validating steps can take considerably more time than they do in commercial data processing.

Scientific data processing needs to draw conclusions, so the steps of sorting and summarization often need to be performed very carefully, using a wide variety of processing tools to ensure no selection biases or wrong relationships are produced.

Scientific data processing often needs a subject-matter expert, in addition to a data expert, to work with the quantities involved.

2. Commercial Data Processing

Commercial data processing has multiple uses, and may not necessarily require complex sorting. It was first used widely in the field of marketing, for customer relationship management applications, and in banking, billing, and payroll functions.

Most of the data captured in these applications is standardized and somewhat error-proofed. That is, constrained capture fields prevent many entry errors, so in some cases raw data can be processed directly, or with minimal and largely automated error checking.

Commercial data processing usually relies on standard relational databases and uses batch processing. However, some applications, particularly in technology, may use non-relational databases.

There are still many applications within commercial data processing that lean towards a scientific approach, such as predictive market research. These may be considered a hybrid of the two methods.

Data Processing Types by Processing Method

Within the main areas of scientific and commercial processing, different methods are used for applying the processing steps to data. The three main types of data processing we’re going to discuss are automatic/manual, batch, and real-time data processing.

3. Automatic versus Manual Data Processing

It may not seem possible, but even today people still use manual data processing. Bookkeeping data processing functions can be performed from a ledger, customer surveys may be manually collected and processed, and even spreadsheet-based data processing is now considered somewhat manual. In some of the more difficult parts of data processing, a manual component may be needed for intuitive reasoning.

The first technology that led to the development of automated systems in data processing was punch cards used in census counting. Punch cards were also used in the early days of payroll data processing.

The Rise of Computers for Data Processing

Corporations began adopting computers for electronic data processing in the 1950s and 1960s, and by the 1970s their use was widespread. Some of the first applications for automated data processing in the way of specialized databases were developed for customer relationship management (CRM) to drive better sales.

Electronic data management became widespread with the introduction of the personal computer in the 1980s. Spreadsheets provided simple electronic assistance for even everyday data management functions such as personal budgeting and expense allocations.

Database Management

Database management provided more automation of data processing functions, which is why I refer to spreadsheets as a now rather manual tool in data management. In a spreadsheet, the user has to manipulate all the data, almost as in a manual system; only the calculations are automated. In a database, by contrast, users can extract data relationships and reports relatively easily, provided the setup and entries are correctly managed.

Autonomous databases now look to be a data processing method of the future, especially in commercial data processing. Oracle and Peloton are poised to offer users more automation with what is termed a “self-driving” database.
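To make the contrast with a spreadsheet concrete, here is a small sketch using Python's built-in `sqlite3` module. The `customers` and `orders` tables and their contents are invented for the example; the point is only that, once the relationship is set up, a single query produces the report.

```python
import sqlite3

# In-memory database with two related tables (hypothetical example data).
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES customers(id),
        total REAL
    );
    INSERT INTO customers VALUES (1, 'Acme'), (2, 'Globex');
    INSERT INTO orders VALUES (1, 1, 50.0), (2, 1, 25.0), (3, 2, 10.0);
    """
)

# One query follows the customer-to-order relationship and builds the report.
rows = conn.execute(
    """
    SELECT c.name, COUNT(o.id), SUM(o.total)
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.id
    GROUP BY c.name
    ORDER BY c.name
    """
).fetchall()

for name, order_count, revenue in rows:
    print(f"{name}: {order_count} orders, {revenue:.2f} total")
```

In a spreadsheet, the same report would typically mean matching rows across two sheets by hand or with lookup formulas.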

This development in the field of automatic data processing, combined with machine learning tools for optimizing and improving service, aims to make accessing and managing data easier for end-users, without the need for highly specialized data professionals in-house.

4. Batch Processing

To save computational time, stand-alone computer systems apply batch processing techniques. This was the case before distributed systems architecture became widespread, and it remains common today. Batch processing is particularly useful in financial applications or where data requires additional layers of security, such as medical records.

Batch processing completes a range of data processes as a batch: a single command applies an action to multiple data sets. This is a little like the comparison of a computer spreadsheet to a calculator in some ways. A calculation can be applied with one function, that is, one step, to a whole column or series of columns, giving multiple results from one action. The same concept is achieved in batch processing for data. A series of actions or results can be achieved by applying a function to a whole series of data. In this way, far less computer processing time is needed.

Batch processing can complete a queue of tasks without human intervention, and data systems may assign priorities to certain functions or schedule set times when batch processing can be completed.

Banks typically use this process to execute transactions after the close of business, when computers are no longer involved in data capture and can be dedicated to processing functions.
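The bank example can be sketched as follows. This is a simplified illustration with invented account names and amounts, assuming transactions are only recorded during the day and applied in a single unattended run afterwards.

```python
from collections import deque

# Hypothetical account balances and a queue of captured transactions.
balances = {"alice": 100.0, "bob": 50.0}
pending = deque()


def capture(account, amount):
    """During business hours: record the transaction, but do not process it."""
    pending.append((account, amount))


def run_batch():
    """After close of business: process the whole queue in one unattended run."""
    processed = 0
    while pending:
        account, amount = pending.popleft()
        balances[account] += amount
        processed += 1
    return processed


capture("alice", -30.0)
capture("bob", 20.0)
capture("alice", 5.0)

count = run_batch()
print(f"processed {count} transactions: {balances}")
```

Separating capture from processing is what lets the machine dedicate itself to one job at a time.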

5. Real-Time Data Processing

For commercial uses, many large data processing applications require real-time processing. That is, they need to get results from data as it arrives. One application of this that most of us can identify with is tracking stock market and currency trends. The data needs to be updated immediately, since investors buy in real time and prices change by the minute. Data on airline schedules and ticketing, and GPS tracking applications in transport services, have similar needs for real-time updates.

Stream Processing

The most common technology used in real-time processing is stream processing. The analytics are drawn directly from the stream, that is, at the source. Because conclusions are drawn without first uploading and transforming the data, the process is much quicker.
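As a rough sketch of the idea, the generator below updates a running average each time a new value arrives, without first storing the whole data set. The `price_stream` function is a stand-in for a live feed and its values are made up.

```python
def price_stream():
    """Hypothetical source: stands in for a live feed of prices."""
    for price in [10.0, 12.0, 11.0, 15.0]:
        yield price


def running_average(stream):
    """Emit an updated average as each value arrives from the stream."""
    count = 0
    total = 0.0
    for value in stream:
        count += 1
        total += value
        yield total / count


averages = list(running_average(price_stream()))
for avg in averages:
    print(f"average so far: {avg:.2f}")
```

Only the count and the running total are kept in memory, so the result is available the moment each value is seen.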

Data Virtualization

Data virtualization techniques are another important development in real-time data processing. The data remains in its source form, and only the information needed for processing is pulled. The beauty of data virtualization is that where transformation is not necessary, it is not done, so the margin for error is reduced.

Data virtualization and stream processing mean that analytics can be drawn in real time much more quickly, which benefits many technical and financial applications by reducing processing times and errors.

Beyond these popular data processing techniques, there are three more, described below.

6. Online Processing

This data processing technique is derived from automatic data processing, and is also known as direct or random access processing. Under this technique, the system processes each transaction at the moment it is entered rather than queuing it for later, so the stored data is continuously kept up to date. The method emphasizes fast input of transactions and connects directly to the database.

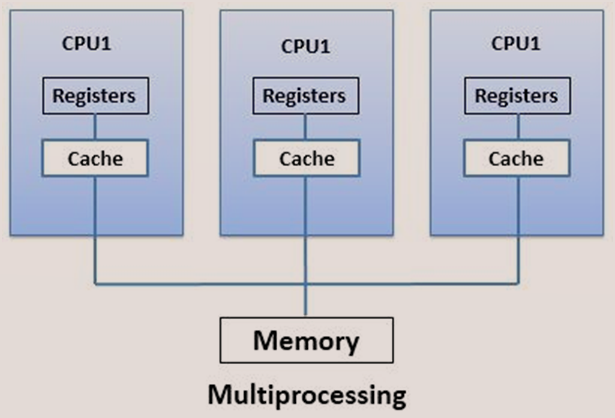

7. Multiprocessing

Multiprocessing is one of the most commonly used data processing techniques. It is found all over the globe, wherever computer-based setups handle data capture and processing.

As the name suggests, multiprocessing is not bound to a single CPU but uses a collection of several CPUs. Because several processing units work on the job together, the overall efficiency is high.

Tasks are broken into smaller pieces and sent to the processors to be worked on in parallel. Results arrive in less time and throughput increases. An additional benefit is that, where the processing units are independent, the failure of one need not stop the others from working.
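A minimal sketch of this split-and-distribute pattern, using `ProcessPoolExecutor` from Python's standard library, might look like the following. The workload (a sum of squares) and the helper names are assumptions chosen only to keep the example small.

```python
from concurrent.futures import ProcessPoolExecutor


def split_into_chunks(values, n_chunks):
    """Break the task into roughly equal pieces, one per processor."""
    if not values:
        return []
    size = -(-len(values) // n_chunks)  # ceiling division
    return [values[i:i + size] for i in range(0, len(values), size)]


def sum_of_squares(chunk):
    """The work done independently on each piece."""
    return sum(v * v for v in chunk)


def process_in_parallel(values, workers=4):
    """Send each chunk to a separate worker process and combine the results."""
    chunks = split_into_chunks(values, workers)
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, chunks))


if __name__ == "__main__":
    print(process_in_parallel(list(range(1, 101))))
```

For a workload this small, the cost of starting worker processes outweighs the gain; the pattern pays off when each chunk carries substantial computation.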

8. Time Sharing

This kind of data processing is based entirely on time. A single processing unit is shared by several users, and each user is allocated time slots in which their work runs on that CPU.

Processing time is divided into segments that are assigned to users in turn, so their time slots never overlap, which makes this a multi-access system. The technique is also widely used, and is popular with startups.

Quick Tips for Choosing the Best Processing Technique
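One common way to divide processor time among users is round-robin scheduling, sketched below as a simple simulation. The user names, workloads, and the `round_robin` function are hypothetical, and real operating systems use considerably more elaborate schedulers.

```python
from collections import deque


def round_robin(jobs, time_slice):
    """Share one processor among users in fixed time slices.

    jobs maps each user to the units of work they need.
    Returns the order in which slices were granted as (user, units) pairs.
    """
    queue = deque(jobs.items())
    schedule = []
    while queue:
        user, remaining = queue.popleft()
        used = min(time_slice, remaining)
        schedule.append((user, used))
        if remaining > used:
            # Unfinished work goes to the back of the queue.
            queue.append((user, remaining - used))
    return schedule


schedule = round_robin({"ana": 5, "ben": 2, "cy": 3}, time_slice=2)
for user, units in schedule:
    print(f"{user} runs for {units} unit(s)")
```

No two users ever hold the processor at the same time, yet each makes steady progress, which is what gives every user the impression of having the machine to themselves.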

- Understand your requirements before choosing a processing technique for your project.

- Filter your data precisely so that the processing technique can be applied to it.

- Recheck the filtered data to confirm it still reflects the original requirement and that no important fields have been lost.

- Decide on the output you want so you have a clear target to work toward.

- With the filtered data and the desired output in hand, look for the best and most reliable processing technique.

- Once you have chosen a technique that fits your requirement, following it through to the end result becomes easier.

- Check the chosen technique as you go so that loopholes are caught before they cause mistakes.

- Always apply ETL functions to recheck your datasets.

- Set a timeline for the requirement; without one, the effort is hard to direct.

- Test your output against the initial requirement once more before delivery.

Summary

This has been a little bit of an introduction to some of the different types of data processing. If you like what you’ve read here and want to learn more, take a look around on our blog for more about data processing systems.

