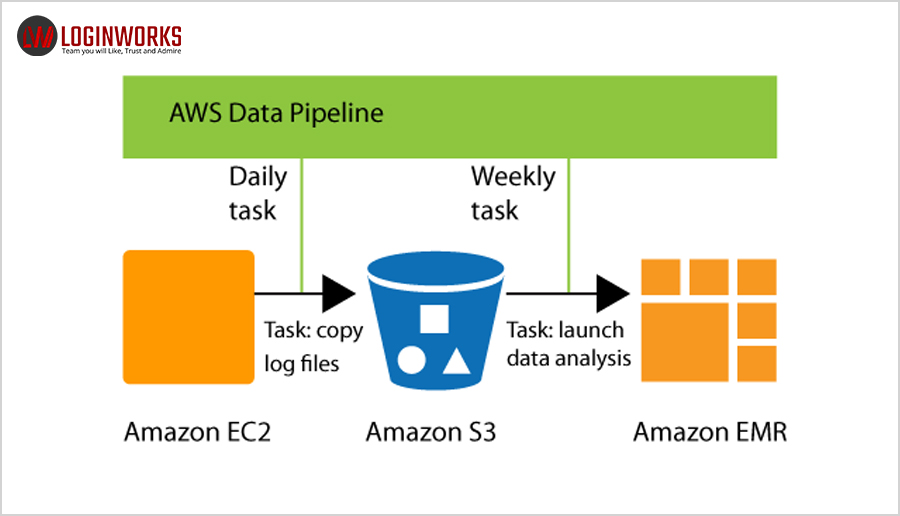

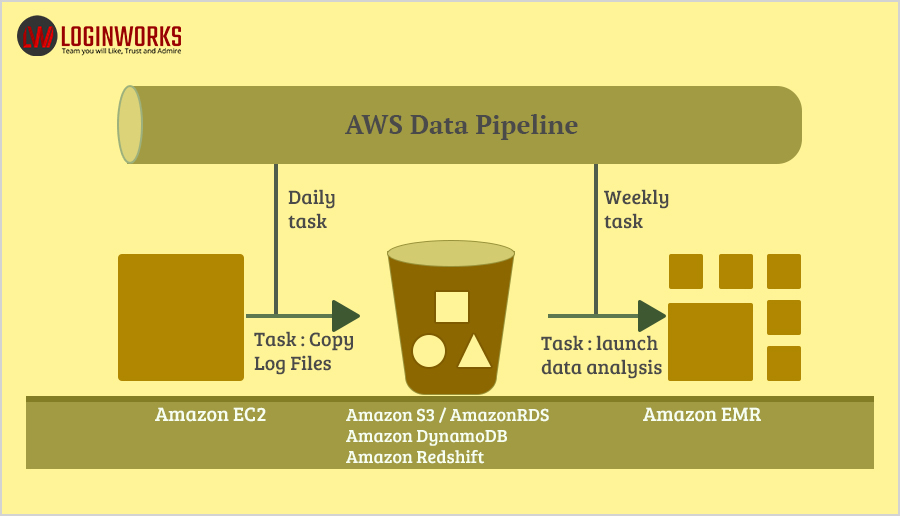

Amazon Web Services (AWS) is the platform of cloud web hosting on Amazon that offers reliable, flexible, scalable, convenient and cost-efficient solutions. AWS Data Pipeline is a type of web service that is designed to make it more convenient for users for the integration of data that is spread across several AWS services for analysis from a single location. With the employment of AWS data pipeline, the data can be accessed, processed and then proficiently transferred to the AWS services.

AWS data processing pipeline is a tool that enables the processing and movement of data between storage and computation services on the public cloud of AWS and the resources on the premises. The data processing pipeline oversees and streamlines data-driven work processes, which incorporates scheduling of data movement and preparing. The service is helpful for clients who need to migrate data along a specific pipeline of sources, goals and data handling tasks. By making use of a template of data processing pipeline information can be conveniently accessed, processed, and automatically transferred to another service or system. The data pipeline can be accessed through the console of AWS management or through the command line interface or the service application programming interfaces.

The AWS data pipeline performs tasks such as command line scripting and SQL querying. Resources can be managed by the developer or the AWS data processing pipeline can be used for resource management with enhanced efficiency, for instance, Amazon EC2 and clusters of Amazon Elastic MapReduce. The AWS data pipeline is suitable for work processes that are already optimized for the web service but can also be connected to the data sources on premises as well as to third-party sources of data.

Jump to Section

A Few Features of the AWS Data Pipeline

- Deployment within the infrastructure of AWS that is highly available and well distributed.

- Provision for drag and drop console feature within the interface of AWS

- Support system for handling of errors, tracking of dependency and scheduling

- Distribution of work from one machine to several other machines

- Billing is done on the basis of use level, charged monthly.

- Selection of the resources of computation those are required for the execution of data pipeline logic.

How to get started with AWS data processing pipelines?

The AWS data processing pipeline can be accessed through the console of AWS management, the command line interface of AWS or the service APIs. Amazon has got this covered by offering a series of AWS data pipeline tutorials to facilitate the efforts.

Process the data using Amazon EMR with Hadoop Streaming

- In this process, a pipeline is created for a simple cluster of Amazon EMR for running an existing job of Hadoop Streaming provided by the Amazon EMR and then an Amazon SNS notification is transmitted that indicates that the task has been successfully completed.

- The Amazon EMR cluster resource that is used here for the task is provided by the AWS data processing pipeline.

- The clusters that are so spawned are displayed in the console of Amazon EMR and charged to the user’s AWS account.

- The pipeline objects used includes EmrActivity, EmrCluter, Schedule, and SnsAlarm.

Importing and Exporting of DynamoDB data using the AWS data processing pipeline

This involves the migration of schema-less data into and out of the Amazon DynamoDB by employing the AWS data processing pipeline.

Copying CSV data amongst Amazon S3 buckets by employing the AWS data processing pipeline

- This involves the process of creation of a data pipeline for copying data from one Amazon S3 bucket to another.

- After that, an Amazon SNS notification is sent to indicate that the copying task has gotten successfully completed.

- The objects used by the pipeline includeCopyActivity, Schedule, EC2Resource, S3DataNode, and SnsAlarm.

Exporting MySQL Data to Amazon S3 by employing the AWS data processing pipeline

- This involves the creation of a data pipeline for copying data from a MySQL database table to a CSV file in an Amazon S3 bucket,

- After which a notification of Amazon SNS is sent that indicates that the copying has been successfully completed.

- The copy task makes use of an EC2 instance that is offered by the AWS data processing pipeline.

- The objects that are used by the AWS data processing pipeline include CopyActivity, EC2Resource, MySqlDataNode, S3DataNode, and SnsAlarm.

Copying the data to Amazon Redshift by employing the AWS data processing pipeline

In this process, a pipeline is created that periodically migrates data to Amazon Redshift from Amazon S3 using the template called Copy to Redshift in the console of AWS data pipeline or by using the file of pipeline definition within the command line interface. The web service Amazon S3 enables the user in storing data in the cloud. Amazon Redshift provides service of data warehousing in the cloud.

Steps of Building a Data Pipeline

A pipeline defines the communication of business logic to the AWS data processing pipeline. The information that it possesses consists of

- Names, formats, and locations of every data source

- Tasks that are executed for the transformation of data

- The schedules of these activities

- The resources that run the preconditions and activities

- The preconditions and terms that need to be fulfilled before scheduling the tasks or activities

- The different ways to alert the user with regular updates on the status of task completion throughout the process of pipeline execution

It is the AWS data processing pipeline that determines the activities, arranges for scheduling them and then makes for the assignment of the tasks to the task runners. If the task does not get successfully completed then the AWS data processing pipeline retries the task again and again in accordance to the user instructions and then reassigns the same task to a different task runner for successful completion. If the task fails to get completed again then the pipeline can be configured to notify the same and then take the necessary action.

The creation of a pipeline definition takes place in the following ways-

- A pipeline can be graphically created by making use of the console of AWS data processing pipeline

- A pipeline can be textually created by writing a JSON file in a format that is employed in the command line interface

- A pipeline can be programmatically created by calling the web service with either the AWS SDKs or the AWS data processing pipeline application programming interface.

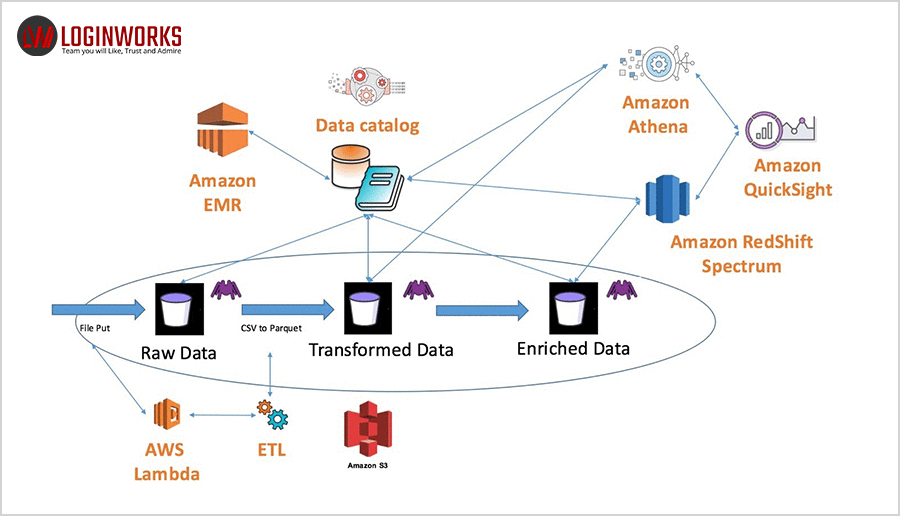

The characteristic components of the pipeline definition include-

Data Nodes: The location of input data for an activity or the location of storage of output data.

Activities: A definition of the task to be performed with adherence to a schedule by employing a computational resource and data nodes of input and output.

Preconditions: These are a group of conditional statements and terms that need to be fulfilled before the task can be run.

Scheduling pipelines: This defines the exact time of an event that is scheduled to be run.

Resources: This is the resource of computation that performs the activities defined by the pipeline.

Actions: When specific conditions occur during the processing of activities like a failure of completion, an action is triggered to notify the user.

Implement the following steps for setting up a data pipeline-

Creation of the pipeline

- Register yourself with the AWS by signing in to the AWS account. Use the link given here to open the AWS data pipeline console: https://console.aws.amazon.com/datapipeline/

- Select that region in the bar of navigation.

- Click on the button that says “Create New Pipeline”

- Fill out the relevant details in all the fields.

- Choose Build in the Source field using a template and then make this template your selection by using ShellCommandActivity. Only upon selection of the template does the Parameters section get opened.

- The Shell command and S3 input folder should be left to run with their default values.

- Click on the folder icon beside S3 output folder and select the buckets.

- Leave the values as default values in the Schedule.

- Let the logging remain enabled in the Pipeline Configuration. Click on the folder icon under S3 location for the logs and select the buckets.

- Let the IAM roles values remain as default under Security/Access. Click on the Activate button.

How can the data processing pipeline on AWS be deleted?

With the deletion of the pipeline, all the objects associated with it get deleted as well. Follow the steps mentioned below in order to delete a pipeline.

Step 1: Select the pipeline to be deleted from the list of pipelines.

Step 2: Click on the Actions button and select Delete.

The selected pipeline will be deleted along with the objects linked to it.

Characteristics of AWS Data Processing Pipeline

- Convenience and cost-effectiveness: The simple and easy drag and drop feature makes the creation of a data processing pipeline on the console highly convenient. A whole collection of pipeline templates is provided by the visual pipeline creator of the AWS data pipeline. These pipeline templates make the creation of pipelines much simpler for such tasks that include processing of log files, recording required data to Amazon S3 and so on.

- High reliability: The infrastructure of AWS data pipeline is designed in such a way for the execution of fault-tolerant activities. If the data source or activity logic is affected with any kind of flaws then the data pipeline reruns the activity automatically. If the flaw persists, then the pipeline sends a notification of the same that can be configured for alerting in the events of successful running, delay in activity, failure etc.

- High flexibility: The AWS data pipeline makes provision for a variety of features such as tracking, scheduling, handling of errors etc. The data pipeline can be duly configured for the implementation of actions like the execution of SQL queries directly against the databases, running Amazon EMR tasks, execution of custom applications, running on Amazon EC2 etc.

Conclusion

AWS data processing pipelines are a basic piece of the guide, design, and tasks of anybody hoping to utilize information as a vital resource. Given the variety of qualities and a wide range of data sources, this can be a test for even the most modern groups of product managers, accountants, arrangement architects and system engineers. If data is a well-organized asset, at that point data pipelines are required to make provision for the connection with data spread across various frameworks. This guarantees that valuable time of analysts, data scientists and engineers does not get unnecessarily wasted.

- Business Intelligence Vs Data Analytics: What’s the Difference? - December 10, 2020

- Effective Ways Data Analytics Helps Improve Business Growth - July 28, 2020

- How the Automotive Industry is Benefitting From Web Scraping - July 23, 2020