Jump to Section

Introduction

Relational Database Management System (R-DBMS) is a structural database, which generally supports temporary tables. These tables can be created at runtime phase.

The major difference between the permanent table and temporary is that permanent table either can be created by developer or user’s specification but it remains the same in the database until you delete or drop the table. On the other hand, Temporary tables stores their data in the TempDB folder in object explorer window and it gets deleted automatically when no longer used.

You can use all the main commands like SELECT, UPDATE and DELETE commands. The temporary tables are very useful in some ways to keep important temporary data for the temporary time period.

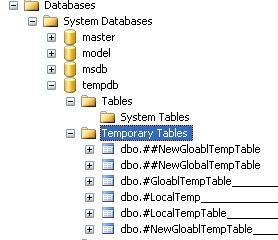

Storage Location in Database

Temporary tables are always stored in tempdb database folder. When we create a temporary table it straightly goes inside the folder “Temporary Tables”. Aas you can the image given below.

Types of Temporary tables

Temporary tables contain two types which are as follows

- Local Temporary tables

- Global Temporary tables

Local Temporary Tables

Local temporary tables are easily available to the user for the current connection. And, it gets deleted automatically when user instances no longer are used for the table connection. Therefore, Local temporary tables contain # symbol in the database.

How to Create a Local Temporary Table

A local temporary table is similar to a normal table. There you add one on prefix of the table name with 1 pound (#) sign. For clarification to know about, #PersonDetails is a local temporary table, with containing Id and Name column name. So, let’s create the table as given below.

Create Table #PersonDetails(Id int, Name nvarchar(20))

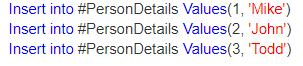

Insert Data into the temporary table:

Select the data from the temporary table:

Select * from #PersonDetails

How to check if the local temporary table is created?

Once you create a temporary table, it is always stored in the tempdb folder in the object explorer window. Query the sysobjects system table in TEMPDB. The table name shows suffix name with a lot of underscores and a random number verification. For this purpose, you can use the LIKE operator with them.

Select name from tempdb.sysobjects

where name like ‘#PersonDetails%’

You may also check the existence of newly added temporary tables by object explorer. In the object explorer window, must expand tempdb database folder, and then after you need to expand temporary table folder. Finally, you will get a temporary table that is created by you.

A local temporary table is accessible only for the connection that is created the table. If you ever open other query database window and execute the same query, then you get an error statement like ‘Invalid object name #PersonDetails’. Hence proved that local temporary tables accessible, only for the connection that is created the table.

A local temporary table automatically deletes itself when the connection contains no use of it. If the similar user wants to drop the temporary table, you can do it by using the statement as given below;

DROP TABLE #PersonDetails

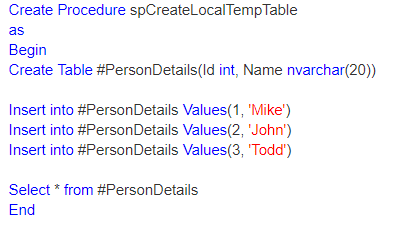

Create a table in a stored procedure

Whenever a temporary table creates inside the stored procedure. Then, it gets drop automatically upon the completion of stored procedure’s execution. A stored procedure is given below, creates #PersonDetails temporary table, populates it. Finally, it returns all the data records and removes the temporary table immediately after the completion of the stored procedure execution time period.

Yes, It is also possible for other connections, to create a local temporary table with the same table name. So, let’s understand through a real example. There are User1 and User2, both users create a local temporary table with the same table name #PersonDetails. Now, further expand the “Temporary Tables” folder in the tempdb database window, you will get two tables with name #PersonDetails and few random number at the end of the table name. A common exception between, User1 and User2 local temporary tables, SQL server defines the random number at the end of the temporary table name.

Global Temporary Tables

Global Temporary tables name starts with a double hashtag (“##”). These temporary tables are visible to all the connections of the SQL server and it only gets deleted when the last connection referencing the table is closed in the database.

How to Create a Global Temporary Table

Global Temporary Table contains prefix number with two pound (##) symbols. EmployeeDetails Table is the global temporary table. To create this temp table use this following script as follows;

Create Table ##EmployeeDetails(Id int, Name nvarchar(20))

Furthermore, multiple users over across multiple connections take local temporary tables with the same name. But, a global temporary table name contains the only unique name, and if you take the name of the global temporary table in the window object explorer, you may find no random suffix number at the end of the table name.

Difference: Local vs Global Temporary Tables

There are some major differences about Local Temporary Tables and Global Temporary Tables are follwoing as;

1. Local Temporary tables always contain prefix number with single pound (#) sign, whereas global temporary tables contain prefix number with 2 pound (##) sign.

2. SQL Server gives few random numbers at the end of the local temporary table name, whereas this can not be possible with global temporary table names.

3. Local temporary tables only visible to that particular session of the SQL Server which create itself, Global temporary tables give the visibility to all the connections of the SQL server.

4. Local temporary tables automatically drop or delete when no longer use of it, whereas Global temporary tables only delete when the last connection referencing the table is closed.

Temporary Tables Limitations

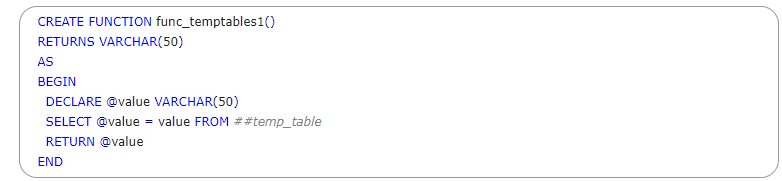

In temporary tables, you can not have any access to global and local temp tables in functions as follows;

So, if you run this script of a query, database throws an error statement like as follows;

Table Valued Parameters in Tempdb

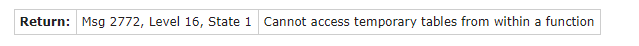

The Table-Valued Parameter (TVP) is a special kind of variable, that usually extends to use it. When table variables pass the parameters, then the table materialize in the folder “TempDB” in object explorer window in the system database as a table variable and pass by the reference value and a pointer value to the table in the tempDB.

TV parameters are being in use since SQL Server 2008 to send several rows of the data value to a T-SQL via sp_ExecuteSQL Any particular data value to the programmer, that can be used within T-SQL command as well as in the client application program. Therefore, they are good for sending unique client tables to the T-SQL server.

Table- Valued in Transact-SQL

you also can declare table-valued variable functions in T-SQL, also you can insert data into them, and then pass the variables as table-valued parameters to stored procedures. Table-valued parameters only pass as read-only. You cannot perform Update, Delete and Insert commands cannot be done in this situation.

So, let’s create a User-Defined Table Type and which defines the structure of the table. Here is a simple example of their use in T-SQL.

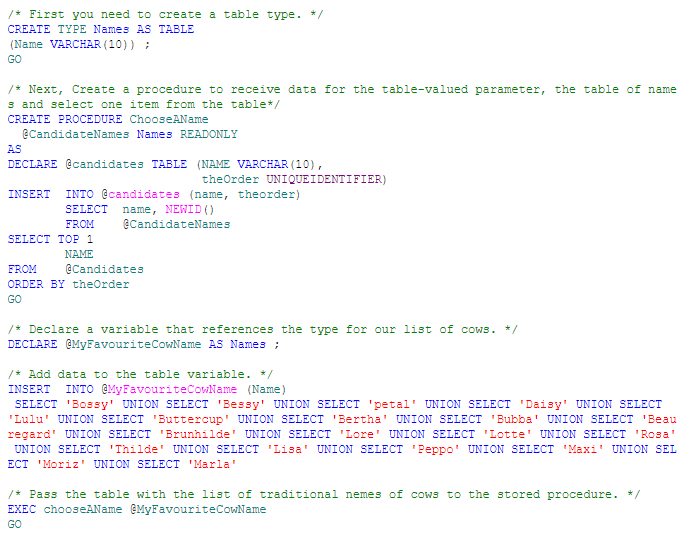

So, as with Table Variables, the table-valued parameter put a clean stoppage to exist. Once it comes as out of scope and the type of definition still remains the same until it is explicitly dropped. Seems like as Table Variables, they do not require locks when the data populates with their client application, and statistics cannot maintain on columns functions of table-valued parameters. Also, you are not able to use a table-valued parameter with SELECT INTO or INSERT EXEC commands. But if you expect, then a table-valued parameter can be in the FROM clause or select INTO in the string INSERT EXEC or stored-procedure.

The table-valued parameter resolves the common problem of “want to pass a local variable to dynamic variable in SQL” that specifically executes with a .sp_Execute SQL extension.

Tempdb: A Temporary Database

Temporary tables and table variables both stored in the tempdb database. The main difference of TempDB, that any objects such as tables clear out on startup. Because tempdb always uses the simple recovery model schema in the database. Once the transaction completes then it makes the clearance from the log on the next TempDB database checkpoint. As a result, temporary tables seems like to remain cache memory, but only if they use simultaneously same as with a base table. TempDB database generates a system which calls for a temporary object reuse, which works with a small portion of cache memory in temporary objects with the plan if it maintains sufficient memory. Hence proved, that temporary objects always exist only in memory.

DBCC CHECKDB in SQL Server

DBCC supports databases that contain memory-optimize tables but validation only starts with disk-based tables. It always checks physical and logical data optimization and data integrity of all the database objects

There are some main checkpoint as follows;

- DBCC CHECKALLOC, which runs only in the database. It only checks and verifies data consistency of disk space allocation data structures in a specified database.

- DBCC CHECKTABLE, which runs on every base table and tables view in the database, which always checks the data integrity of all the current pages which are being used and data structures that creates indexes view.

- DBCC CHECKCATALOG, which runs on the database, which always checks catalog consistency in the database.

- It verifies the contents of every indexed view in the database. It validates low-level link consistency for table metadata and file administrator system directories.

- Although, as part of database backup and recovery, a CHECKSUM digit validation is done for files in memory-optimize filegroups in the tempdb database.

- DBCC contains a small limitation, that when you run the statement DBCC CHECKDB, it takes time to get the output for a large number of records containing the database.

Example with DBCC CHECKDB

Let’s understand with an example. Moderate tables in queries described as ‘hashes’, ‘sorts’ and ‘spools’ materialize in TempDB database with those required for many ‘physical’ operations which execute SQL commands. This may use with Snapshot isolation, Multiple Active Results Sets (MARS), triggers and online-index-build.

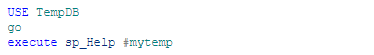

If you make an interest to see what is going on, you can just view the tables in TempDB, and you can use sp_help work on temporary tables. it works only if you call them from TempDB.

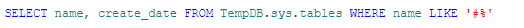

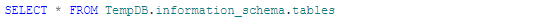

Also, you may find them in the database system views of TempDB without swapping databases.

and the information Schema

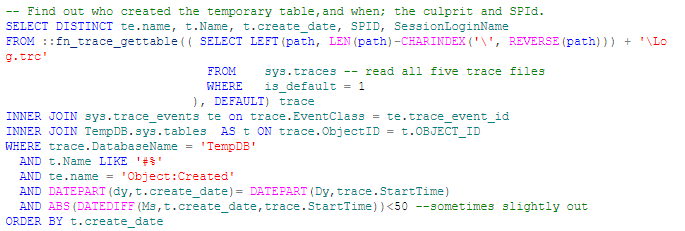

Yes, you may find what process, and the user holds with temporary tables in TempDB and refuses to give up space

In this situation, user-defined datatypes cannot use in temporary tables until the datatypes exist in TempDB.

SQL Server DBCC CHECKDB Options

There are options to use with DBCC CHECKDB as follows;

- NOINDEX – It specifies that non-clustered indexes must not be performed for user tables in the database. This decreases the complete execution time. NOINDEX does not affect stored tables in the database because data integrity checks always perform on system table indexes.

- NO_INFOMSGS – It gives suppresses all information messages in the database.

- PHYSICAL_ONLY – It considers check limit of the physical structure of the page, and record headers. Physical_only checkdb provide a small check of the physical data consistency in the database, and it also finds torn pages, checksum failures, and common hardware failures.

- TABLOCK – This uses the locks instead of using an internal database snapshot checks. This involves a short-term exclusive (X) lock on the database. TABLOCK checkdb uses to make run fast on a database in the situation of heavy pressure load but it also decreases the availability of the database when DBCC CHECKDB is in running process.

- DATA_PURITY – This option is used to check the database for column number values that shows either not valid or out-of-range. For example, DBCC CHECKDB finds columns with date name and time values which is more larger than or less than the accepted range for the DateTime data type in the database.

How to repair a SQL Server database

Repair can be done in some cases that if you did not make the backup table or database and if you lost some confidential data from the database, in such cases, you can repair your data back into the database. To get the back up your data use DBCC CHECKDB with a repair option. There are the repair options that are available to use. These options may or may not work.

- REPAIR_ALLOW_DATA_LOSS – This function only repairs all reported errors which generated by the database. This repairs may lead to some data loss but you cannot confirm.

- REPAIR_REBUILD – This function only performs repairs that do not contain any possibility of data loss. This also includes quick repairs.

SQL Constraints

Constraints define the specified rule to enforce the data columns in the table. Constraints define accuracy and reliability of the data in the database. It can be on column level and table level. The column level constraints only apply to one column, on the other hand, the table level constraints apply to the whole table in the database.

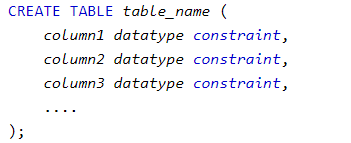

There are constraints syntax as follows;

Types of SQL Constraints

There are seven types of the SQL constraints as follows;

NOT NULL – This SQL constraint define a column that cannot contain a NULL value.

UNIQUE – This SQL Constraint defines that all values are different in a column.

PRIMARY KEY – This is the combination of a NOT NULL and UNIQUE. It identifies uniquely each row in a table.

FOREIGN KEY – This SQL Constraint identifies uniquely a record in other tables.

CHECK – This SQL constraint defines that all values satisfy a specific condition in a column name.

DEFAULT – This SQL constraint sets a default value for a column name when none of the value mentioned.

INDEX – This SQL constraint uses to create and fetch data from the database in a quick manner.

SQL NOT NULL Constraint

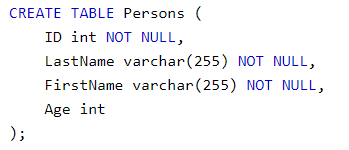

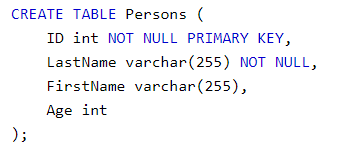

As we know, by default a single column in the table contains a null value, but when we talk about Not Null constraints which define a column that does not contain null values in the table. There are some fields in the table, that contains a non-null value that means you cannot perform any update or insert a record without adding a particular value to the fields in the database.

Let’s go through the simple syntax of Not Null SQL constraints, it ensures that “ID”, “LastName”, and “FirstName” columns will NOT accept Null values:

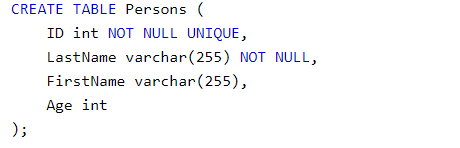

SQL Unique Constraint

This SQL Constraint defines that all values are different in a column. UNIQUE constraints provide a complete uniqueness for a particular column or set of columns in a different manner. A Primary key constraint automatically contains UNIQUE constraint in the table. Although, you can take many UNIQUE constraints on one table. Let’s understand with a syntax example for creating the SQL Unique constraint as follows;

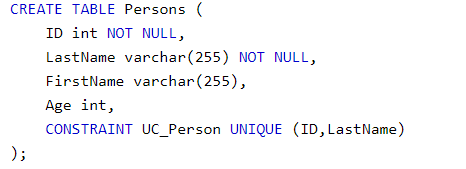

To create a UNIQUE constraint on multiple columns, use the following SQL syntax as follows:

To drop the Unique constraint use the syntax for the same;

Alter Table Persons DROP CONSTRAINT UC_Person.

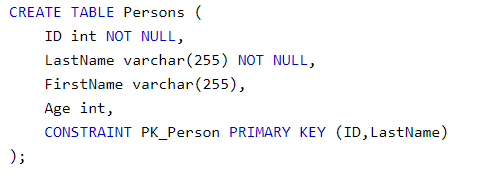

SQL Primary Key Constraint

SQL Primary key constraint always identifies each record uniquely in the database. A primary key can contain uniques key but it can not contain null values in the table, and a table only can have one primary key per table but it can have more than a unique key. Let’s understand with a syntax of primary key constraint.

As you can see, we put the primary key on “ID” column in the table “Persons”

In the example above there is only one primary key which is (PK_Person). Although, the value of the primary key is the combination of two column (ID and LastName).

To drop Primary key constraint use this following syntax as follows;

Alter Table Persons DROP CONSTRAINT pk_Person

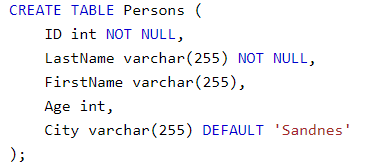

SQL Default Constraint

This SQL constraint sets a default value for a column name when none of the value mentioned. The default value adds to all new records if no different value is specified in the table. Let’s understand with a syntax of default constraint as follows;

Let’s set a DEFAULT value for the column “City” in the table “Persons”

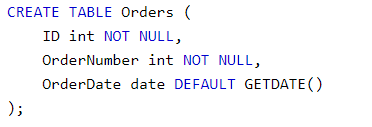

The DEFAULT constraint also uses to insert system values, by using this GETDATE() functions.

To drop the default constraint use the following syntax as follows;

ALTER TABLE Persons ALTER COLUMN City DROP DEFAULT

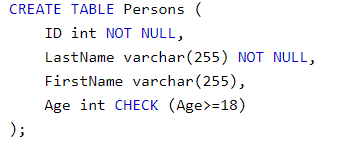

SQL Check Constraint

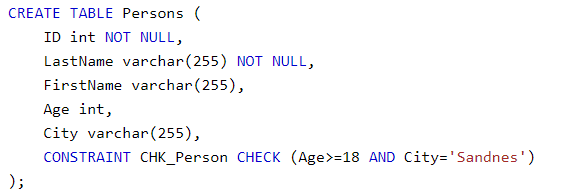

This SQL constraint defines a specific condition in a column name. If CHECK constraint defines in a particular column then it allows only specific values for a particular column. If CHECK constraint defines on a table then limit of the certain values in single columns which are based on values in other columns.

Let’s understand with a syntax of SQL check constraint on the “Age” column name and the table “Persons”, it checks only that you cannot have any record values or a person which is below 18 years in the table “Persons”.

To allow Check constraint on multiples columns in the table as follows;

To drop Check constraint use the following syntax as follows;

ALTER TABLE Persons DROP CONSTRAINT CHK_PersonAge

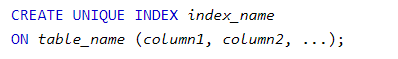

SQL Index Constraint

This SQL constraint uses to create and fetch data from the database in a quick manner. Here, Fron end user cannot see these indexes, they just use it for speed up searches. But there is a limitation which is, update in the table with indexes consume more time. So, create indexes on columns in the table that search very fast. Let’s have a look at a syntax as follows;

To drop an Index use this;

DROP INDEX table_name.index_name

Conclusion

In this article, I hope you realize the common differences between the different types of temporary tables which is supported by Microsoft SQL server. Temporary tables really help to reduce your workload when you try to put your data into temp tables. In temporary tables, there are DBCC Checkdb and Table-Valued Parameters, which are the most common and most powerful entity in the SQL database while repairing, searching and prevent data loss.

Moreover, the Unique key constraints are the most popular and commonly used SQL constraints which help you in making the less script and are very useful for result accuracy.

- Difference Between SQL and MySQL - April 14, 2020

- How to work with Subquery in Data Mining - March 23, 2018

- How to use browser features of Javascript? - March 9, 2018